Created by: 虚拟的现实. Last updated: September 23, 2025 (16-minute read).

1. Introduction

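For the x86 procedure, refer to the corresponding x86 document.

The ARM environment is driven mainly by Xinchuang (domestic IT innovation) requirements. The deployment below follows the installation document provided by the government cloud.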

1.1. Software environment

| Software | Version |
|---|---|
| Operating system | Kylin Linux Advanced Server V10 (Halberd) SP3 |
| Docker | 28.0.4 |
| Kubernetes | 1.28.15 |

Overall server plan:

| Role | IP | Components |
|---|---|---|
| k8s-master1 | 192.168.120.51 | kube-apiserver, kube-controller-manager, kube-scheduler, etcd, kubelet, kube-proxy, docker |
| k8s-master2 | 192.168.120.52 | kube-apiserver, kube-controller-manager, kube-scheduler, etcd, kubelet, kube-proxy, docker |
| k8s-master3 | 192.168.120.53 | kube-apiserver, kube-controller-manager, kube-scheduler, etcd, kubelet, kube-proxy, docker |
| k8s-node1 | 192.168.120.54 | kubelet, kube-proxy, docker, keepalived + nginx |
| k8s-node2 | 192.168.120.55 | kubelet, kube-proxy, docker, keepalived + nginx |
| k8s-node3 | 192.168.120.56 | kubelet, kube-proxy, docker, keepalived + nginx |
| VIP | 192.168.120.50 | |

2. Operating system initialization

Run the following on every host.

2.1. Install dependency packages

yum install -y conntrack-tools conntrack ntpdate ntp ipvsadm ipset iptables curl sysstat libseccomp vim net-tools rpcbind nfs-utils

2.2. Cluster IP mapping

# shell:
ip_addr=$(ip addr show | grep -E 'inet.*brd' | grep -v '127.0.0.1' | awk '{print $2}' | cut -d '/' -f1 | head -n 1)
if [ -z "$ip_addr" ]; then
    echo "Unable to obtain a valid IPv4 address"
else
    echo "Detected IP address: $ip_addr"
    # Extract the last octet of the IP
    ip_last_octet=$(echo "$ip_addr" | awk -F '.' '{print $4}')
    # Build the target hostname
    target_hostname="host${ip_last_octet}"
    # Set the hostname
    hostnamectl set-hostname "$target_hostname"
    if [ $? -eq 0 ]; then
        echo "$target_hostname" > /etc/hostname
    fi
fi

cat >> /etc/hosts << EOF

192.168.120.51 host51

192.168.120.52 host52

192.168.120.53 host53

192.168.120.54 host54

192.168.120.55 host55

192.168.120.56 host56

EOF
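The naming rule used above (hostname = "host" plus the last octet of the IP) can also be used to generate the /etc/hosts entries instead of typing them by hand. The following is a minimal sketch under that assumption; the function names hostname_for_ip, render_hosts_entries and append_missing_hosts are illustrative helpers, not part of the original procedure. Unlike a plain `cat >>`, the last helper skips lines that already exist, so it can be re-run safely.

```shell
# Derive the hostN name used in this guide from an IPv4 address.
hostname_for_ip() {
    echo "host${1##*.}"
}

# Print one /etc/hosts line ("IP hostname") per IP given as an argument.
render_hosts_entries() {
    for ip in "$@"; do
        printf '%s %s\n' "$ip" "$(hostname_for_ip "$ip")"
    done
}

# Append only the entries that are not already present in the hosts file.
# $1 is the hosts file; the remaining arguments are the node IPs.
append_missing_hosts() {
    hosts_file="$1"
    shift
    render_hosts_entries "$@" | while IFS= read -r line; do
        grep -qxF "$line" "$hosts_file" || echo "$line" >> "$hosts_file"
    done
}
```

For example, `append_missing_hosts /etc/hosts 192.168.120.51 192.168.120.52 192.168.120.53 192.168.120.54 192.168.120.55 192.168.120.56` would add the six entries of this cluster once.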

2.3. Disable the firewall, disable SELinux, and disable swap

systemctl stop firewalld
systemctl disable firewalld
sed -i 's/SELINUX=enforcing/SELINUX=disabled/' /etc/selinux/config
setenforce 0 && sed -i 's/^SELINUX=.*/SELINUX=disabled/' /etc/selinux/config && getenforce
swapoff -a && sed -i '/ swap / s/^\(.*\)$/#\1/g' /etc/fstab

2.4. Disable Linux transparent huge pages and standard huge pages

echo never > /sys/kernel/mm/transparent_hugepage/defrag
echo never > /sys/kernel/mm/transparent_hugepage/enabled
echo 'echo never > /sys/kernel/mm/transparent_hugepage/defrag' >> /etc/rc.local
echo 'echo never > /sys/kernel/mm/transparent_hugepage/enabled' >> /etc/rc.local
chmod +x /etc/rc.d/rc.local

2.5. File descriptor limits

ulimit -SHn 65535
cat >> /etc/security/limits.conf << EOF
* soft nofile 655360
* hard nofile 131072
* soft nproc 655350
* hard nproc 655350
* soft memlock unlimited
* hard memlock unlimited
EOF

2.6. Linux kernel parameter tuning

Configure kernel IP forwarding and bridge filtering.

# Pass bridged IPv4 traffic to the iptables chains
cat > /etc/sysctl.d/k8s.conf << EOF
# Enable bridge mode [important]
net.bridge.bridge-nf-call-iptables=1
# Enable bridge mode [important]
net.bridge.bridge-nf-call-ip6tables=1
net.ipv4.ip_forward=1
net.ipv4.tcp_tw_recycle=0
# Avoid using swap; allow it only when the system is about to run out of memory
vm.swappiness=0
# Do not check whether enough physical memory is available
vm.overcommit_memory=1
# Do not panic on OOM; let the OOM killer run
vm.panic_on_oom=0
fs.inotify.max_user_instances=8192
fs.inotify.max_user_watches=1048576
fs.file-max=52706963
fs.nr_open=52706963
# Disable IPv6 [important]
# net.ipv6.conf.all.disable_ipv6=1
# net.netfilter.nf_conntrack_max=2310720
# The following parameters address idle timeouts of long-lived connections in ipvs mode
net.ipv4.tcp_keepalive_intvl = 30
net.ipv4.tcp_keepalive_probes = 10
net.ipv4.tcp_keepalive_time = 600
EOF
sysctl --system
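After `sysctl --system`, it can be useful to confirm that the running kernel actually holds the configured values, for example because the net.bridge.* keys only exist once br_netfilter is loaded. The sketch below is one possible check, assuming the file uses plain `key=value` lines as above; the helper names sysctl_path, parse_sysctl_conf and check_sysctl_conf are hypothetical. It reads /proc/sys directly and changes nothing.

```shell
# Map a sysctl key such as net.ipv4.ip_forward to its /proc/sys path.
sysctl_path() {
    echo "/proc/sys/$(echo "$1" | tr '.' '/')"
}

# Print "key value" pairs from a sysctl.d file, skipping comments and
# blank lines and tolerating spaces around "=".
parse_sysctl_conf() {
    sed -e 's/[[:space:]]*#.*$//' -e '/^[[:space:]]*$/d' "$1" |
        awk -F= '{ gsub(/^[ \t]+|[ \t]+$/, "", $1); gsub(/^[ \t]+|[ \t]+$/, "", $2); print $1, $2 }'
}

# Report keys that the kernel does not expose or whose live value differs
# from the configured one. Prints nothing when everything matches.
check_sysctl_conf() {
    parse_sysctl_conf "$1" | while read -r key want; do
        path=$(sysctl_path "$key")
        if [ ! -r "$path" ]; then
            echo "MISSING  $key"
        elif [ "$(cat "$path")" != "$want" ]; then
            echo "DIFFERS  $key (want $want, have $(cat "$path"))"
        fi
    done
}
```

Running `check_sysctl_conf /etc/sysctl.d/k8s.conf` on each host lists only the problem keys; a MISSING line usually means the module that provides the key is not loaded or the kernel no longer has that key.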

2.7. Load the bridge filter module

modprobe br_netfilter
cat > /etc/sysconfig/modules/ipvs.modules <<EOF
#!/bin/bash
modprobe -- ip_vs
modprobe -- ip_vs_rr
modprobe -- ip_vs_wrr
modprobe -- ip_vs_sh
modprobe -- nf_conntrack
modprobe -- br_netfilter
EOF
chmod 755 /etc/sysconfig/modules/ipvs.modules && bash /etc/sysconfig/modules/ipvs.modules && lsmod | grep -e ip_vs -e nf_conntrack
# Check that the bridge filter module loaded successfully
lsmod | grep br_netfilter
# Reload the configuration
sysctl -p /etc/sysctl.d/k8s.conf
# Make sure the required modules are loaded automatically after a reboot
cat >> /etc/rc.d/rc.local << EOF
bash /etc/sysconfig/modules/ipvs.modules
EOF
chmod +x /etc/rc.d/rc.local

2.8. Time synchronization

yum install ntpdate -y
#ntpdate time.windows.com
ntpdate ntp1.aliyun.com
# Or schedule it with crontab:
# `crontab -e`
# 0 */1 * * * /usr/sbin/ntpdate ntp1.aliyun.com
echo "0 */1 * * * /usr/sbin/ntpdate ntp1.aliyun.com" >> /var/spool/cron/root

Run reboot to restart the machine so that the configuration takes effect.

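3. Deploy the etcd cluster

3.1. Prepare certificates

cfssl is an open-source certificate management tool that generates certificates from JSON files and is more convenient to use than openssl. Any server can be used for this step; this guide uses the Master01 node.

Generate the certificates on the Master01 node.

1. Obtain the cfssl tools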

mkdir -p /data/k8s-work
cd /data/k8s-work
# Upload the downloaded cfssl tools to the server
[root@host51 k8s-work]# ls -l
总用量 25164
-rw-r--r-- 1 root root 11534488 8月 13 10:22 cfssl_1.6.5_linux_arm64
-rw-r--r-- 1 root root 8126616 8月 13 10:37 cfssl-certinfo_1.6.5_linux_arm64
-rw-r--r-- 1 root root 6095000 8月 13 10:36 cfssljson_1.6.5_linux_arm64
[root@host51 k8s-work]#
[root@host51 k8s-work]# chmod +x cfssl*
[root@host51 k8s-work]#
[root@host51 k8s-work]# ls -l
总用量 25164
-rwxr-xr-x 1 root root 11534488 8月 13 10:22 cfssl_1.6.5_linux_arm64
-rwxr-xr-x 1 root root 8126616 8月 13 10:37 cfssl-certinfo_1.6.5_linux_arm64
-rwxr-xr-x 1 root root 6095000 8月 13 10:36 cfssljson_1.6.5_linux_arm64
[root@host51 k8s-work]#
mv cfssl_1.6.5_linux_arm64 /usr/local/bin/cfssl
mv cfssl-certinfo_1.6.5_linux_arm64 /usr/local/bin/cfssl-certinfo
mv cfssljson_1.6.5_linux_arm64 /usr/local/bin/cfssljson
cfssl version

2. Create a self-signed certificate authority (CA)

cd /data/k8s-work/

# Create the CA certificate signing request file

cat > ca-csr.json <<"EOF"

{

"CN": "kubernetes",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Beijing",

"L": "Beijing",

"O": "kubemsb",

"OU": "CN"

}

],

"ca": {

"expiry": "87600h"

}

}

EOF

# Create the CA certificate

cfssl gencert -initca ca-csr.json | cfssljson -bare ca

# Configure the CA signing policy

cfssl print-defaults config > ca-config.json

cat > ca-config.json <<"EOF"

{

"signing": {

"default": {

"expiry": "87600h"

},

"profiles": {

"kubernetes": {

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

],

"expiry": "87600h"

}

}

}

}

EOF

3. Issue the etcd HTTPS certificate with the self-signed CA

# Create the certificate signing request file:

cat > etcd-csr.json << "EOF"

{

"CN": "etcd",

"hosts": [

"127.0.0.1",

"192.168.120.51",

"192.168.120.52",

"192.168.120.53",

"192.168.120.151",

"192.168.120.152"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "BeiJing",

"ST": "BeiJing"

}

]

}

EOF

# Generate the certificate: etcd.csr, etcd-key.pem, etcd.pem

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes etcd-csr.json | cfssljson -bare etcd

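Note: the IPs in the hosts field of the file above are the cluster-internal communication IPs of all etcd nodes, and none of them may be omitted. Two spare IPs (192.168.120.151 and 192.168.120.152) are reserved to make later expansion easier.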
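Because a missing IP in the hosts field only shows up later as a TLS error, it may help to check the request file before signing. The sketch below is a plain text check, assuming each host appears as a quoted string in the JSON as in etcd-csr.json above; the function name missing_csr_hosts is a hypothetical helper.

```shell
# Print every IP from the argument list that does not appear as a quoted
# string in the given CSR JSON file. Prints nothing when all are present.
# $1 is the CSR file; the remaining arguments are the IPs to look for.
missing_csr_hosts() {
    csr_file="$1"
    shift
    for ip in "$@"; do
        grep -qF "\"$ip\"" "$csr_file" || echo "$ip"
    done
}
```

For this cluster the call would be `missing_csr_hosts etcd-csr.json 192.168.120.51 192.168.120.52 192.168.120.53`. After signing, `openssl x509 -in etcd.pem -noout -text` shows the Subject Alternative Name list that was actually issued.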

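3.2. Deploy the etcd cluster

https://github.com/etcd-io/etcd/releases (version: 3.5.21)

The following steps are performed on master1. To keep things simple, all files generated on master1 are copied to master2 and master3 afterwards.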

tar zxvf etcd-v3.5.21-linux-arm64.tar.gz

mv etcd-v3.5.21-linux-arm64/{etcd,etcdctl} /usr/local/bin

[root@host51 tmp]# etcdctl version

etcdctl version: 3.5.21

API version: 3.5

# etcd configuration for k8s-master1

mkdir -p /etc/etcd/ssl /var/lib/etcd/default.etcd

cd /data/k8s-work/

cp ca*.pem /etc/etcd/ssl

cp etcd*.pem /etc/etcd/ssl

cat > /etc/etcd/etcd.conf << "EOF"

#[Member]

ETCD_NAME="etcd1"

ETCD_DATA_DIR="/var/lib/etcd/default.etcd"

ETCD_LISTEN_PEER_URLS="https://192.168.120.51:2380"

ETCD_LISTEN_CLIENT_URLS="https://192.168.120.51:2379,http://127.0.0.1:2379"

#[Clustering]

ETCD_INITIAL_ADVERTISE_PEER_URLS="https://192.168.120.51:2380"

ETCD_ADVERTISE_CLIENT_URLS="https://192.168.120.51:2379"

ETCD_INITIAL_CLUSTER="etcd1=https://192.168.120.51:2380,etcd2=https://192.168.120.52:2380,etcd3=https://192.168.120.53:2380"

ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"

ETCD_INITIAL_CLUSTER_STATE="new"

EOF

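Notes:

- ETCD_NAME: node name, unique within the cluster

- ETCD_DATA_DIR: data directory

- ETCD_LISTEN_PEER_URLS: listen address for cluster (peer) communication

- ETCD_LISTEN_CLIENT_URLS: listen address for client access

- ETCD_INITIAL_ADVERTISE_PEER_URLS: peer address advertised to the cluster

- ETCD_ADVERTISE_CLIENT_URLS: client address advertised to clients

- ETCD_INITIAL_CLUSTER: addresses of the cluster members

- ETCD_INITIAL_CLUSTER_TOKEN: cluster token

- ETCD_INITIAL_CLUSTER_STATE: state when joining the cluster; new means a new cluster, existing means joining an existing cluster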
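Only ETCD_NAME and the node's own IP differ between the three members, so the per-node file can be rendered from two arguments rather than edited by hand. This is a minimal sketch under that assumption; render_etcd_conf and the ETCD_CLUSTER variable are illustrative names, and the values are the ones used in this guide.

```shell
# Cluster membership shared by all three members (values from this guide).
ETCD_CLUSTER="etcd1=https://192.168.120.51:2380,etcd2=https://192.168.120.52:2380,etcd3=https://192.168.120.53:2380"

# Print an etcd.conf for one member. $1 = member name, $2 = member IP.
render_etcd_conf() {
    name="$1"
    ip="$2"
    cat << EOF
#[Member]
ETCD_NAME="${name}"
ETCD_DATA_DIR="/var/lib/etcd/default.etcd"
ETCD_LISTEN_PEER_URLS="https://${ip}:2380"
ETCD_LISTEN_CLIENT_URLS="https://${ip}:2379,http://127.0.0.1:2379"
#[Clustering]
ETCD_INITIAL_ADVERTISE_PEER_URLS="https://${ip}:2380"
ETCD_ADVERTISE_CLIENT_URLS="https://${ip}:2379"
ETCD_INITIAL_CLUSTER="${ETCD_CLUSTER}"
ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"
ETCD_INITIAL_CLUSTER_STATE="new"
EOF
}
```

A possible use from master1 is `render_etcd_conf etcd2 192.168.120.52 | ssh root@host52 'cat > /etc/etcd/etcd.conf'`, which writes the second member's file without opening an editor on that host.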

1. Manage etcd with systemd

cat > /usr/lib/systemd/system/etcd.service << 'EOF'
[Unit]
Description=Etcd Server
After=network.target
After=network-online.target
Wants=network-online.target

[Service]
Type=notify
Environment="ETCD_UNSUPPORTED_ARCH=arm64"
EnvironmentFile=/etc/etcd/etcd.conf
ExecStart=/usr/local/bin/etcd \
  --cert-file=/etc/etcd/ssl/etcd.pem \
  --key-file=/etc/etcd/ssl/etcd-key.pem \
  --trusted-ca-file=/etc/etcd/ssl/ca.pem \
  --peer-cert-file=/etc/etcd/ssl/etcd.pem \
  --peer-key-file=/etc/etcd/ssl/etcd-key.pem \
  --peer-trusted-ca-file=/etc/etcd/ssl/ca.pem \
  --peer-client-cert-auth \
  --client-cert-auth \
  --logger=zap
Restart=on-failure
LimitNOFILE=65536
RestartSec=5

[Install]
WantedBy=multi-user.target
EOF

Because this is an ARM environment, Environment="ETCD_UNSUPPORTED_ARCH=arm64" must be added.

2. Copy all files generated on master1 to master2 and master3, then adjust etcd.conf on each node

ssh root@host52 "mkdir -p /etc/etcd/ssl/ && mkdir -p /var/lib/etcd/default.etcd"

scp -rp /etc/etcd/* root@host52:/etc/etcd/

scp -r /usr/lib/systemd/system/etcd.service root@host52:/usr/lib/systemd/system/

scp -r /usr/local/bin/{etcd,etcdctl} root@host52:/usr/local/bin/

ssh root@host53 "mkdir -p /etc/etcd/ssl/ && mkdir -p /var/lib/etcd/default.etcd"

scp -rp /etc/etcd/* root@host53:/etc/etcd/

scp -r /usr/lib/systemd/system/etcd.service root@host53:/usr/lib/systemd/system/

scp -r /usr/local/bin/{etcd,etcdctl} root@host53:/usr/local/bin/

# Adjust etcd.conf on each node

# etcd configuration for k8s-master2

cat > /etc/etcd/etcd.conf << EOF

#[Member]

ETCD_NAME="etcd2"

ETCD_DATA_DIR="/var/lib/etcd/default.etcd"

ETCD_LISTEN_PEER_URLS="https://192.168.120.52:2380"

ETCD_LISTEN_CLIENT_URLS="https://192.168.120.52:2379,http://127.0.0.1:2379"

#[Clustering]

ETCD_INITIAL_ADVERTISE_PEER_URLS="https://192.168.120.52:2380"

ETCD_ADVERTISE_CLIENT_URLS="https://192.168.120.52:2379"

ETCD_INITIAL_CLUSTER="etcd1=https://192.168.120.51:2380,etcd2=https://192.168.120.52:2380,etcd3=https://192.168.120.53:2380"

ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"

ETCD_INITIAL_CLUSTER_STATE="new"

EOF

# etcd configuration for k8s-master3

cat > /etc/etcd/etcd.conf << EOF

#[Member]

ETCD_NAME="etcd3"

ETCD_DATA_DIR="/var/lib/etcd/default.etcd"

ETCD_LISTEN_PEER_URLS="https://192.168.120.53:2380"

ETCD_LISTEN_CLIENT_URLS="https://192.168.120.53:2379,http://127.0.0.1:2379"

#[Clustering]

ETCD_INITIAL_ADVERTISE_PEER_URLS="https://192.168.120.53:2380"

ETCD_ADVERTISE_CLIENT_URLS="https://192.168.120.53:2379"

ETCD_INITIAL_CLUSTER="etcd1=https://192.168.120.51:2380,etcd2=https://192.168.120.52:2380,etcd3=https://192.168.120.53:2380"

ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"

ETCD_INITIAL_CLUSTER_STATE="new"

EOF

# Start etcd and enable it at boot (run on all three masters)

systemctl daemon-reload

systemctl restart etcd

systemctl enable etcd

systemctl status etcd

3. Check the cluster status

[root@host51 k8s-work]# etcdctl member list
1bbc501e55ccdcbc, started, etcd3, https://192.168.120.53:2380, https://192.168.120.53:2379, false
c2bbef323a613a8d, started, etcd1, https://192.168.120.51:2380, https://192.168.120.51:2379, false
d38d45c2041ce6eb, started, etcd2, https://192.168.120.52:2380, https://192.168.120.52:2379, false
[root@host51 bin]#
[root@host51 k8s-work]# etcdctl member list -w table
+------------------+---------+-------+-----------------------------+-----------------------------+------------+
|        ID        | STATUS  | NAME  |         PEER ADDRS          |        CLIENT ADDRS         | IS LEARNER |
+------------------+---------+-------+-----------------------------+-----------------------------+------------+
| 1bbc501e55ccdcbc | started | etcd3 | https://192.168.120.53:2380 | https://192.168.120.53:2379 |      false |
| c2bbef323a613a8d | started | etcd1 | https://192.168.120.51:2380 | https://192.168.120.51:2379 |      false |
| d38d45c2041ce6eb | started | etcd2 | https://192.168.120.52:2380 | https://192.168.120.52:2379 |      false |
+------------------+---------+-------+-----------------------------+-----------------------------+------------+
[root@host51 k8s-work]# ETCDCTL_API=3 /usr/local/bin/etcdctl --write-out=table --cacert=/etc/etcd/ssl/ca.pem --cert=/etc/etcd/ssl/etcd.pem --key=/etc/etcd/ssl/etcd-key.pem --endpoints="https://192.168.120.51:2379,https://192.168.120.52:2379,https://192.168.120.53:2379" endpoint health
+-----------------------------+--------+-------------+-------+
|          ENDPOINT           | HEALTH |    TOOK     | ERROR |
+-----------------------------+--------+-------------+-------+
| https://192.168.120.51:2379 |   true |  9.879548ms |       |
| https://192.168.120.52:2379 |   true |  9.925658ms |       |
| https://192.168.120.53:2379 |   true | 10.123852ms |       |
+-----------------------------+--------+-------------+-------+
[root@host51 k8s-work]#
# endpoint status
# endpoint health

4. Install Docker and cri-dockerd

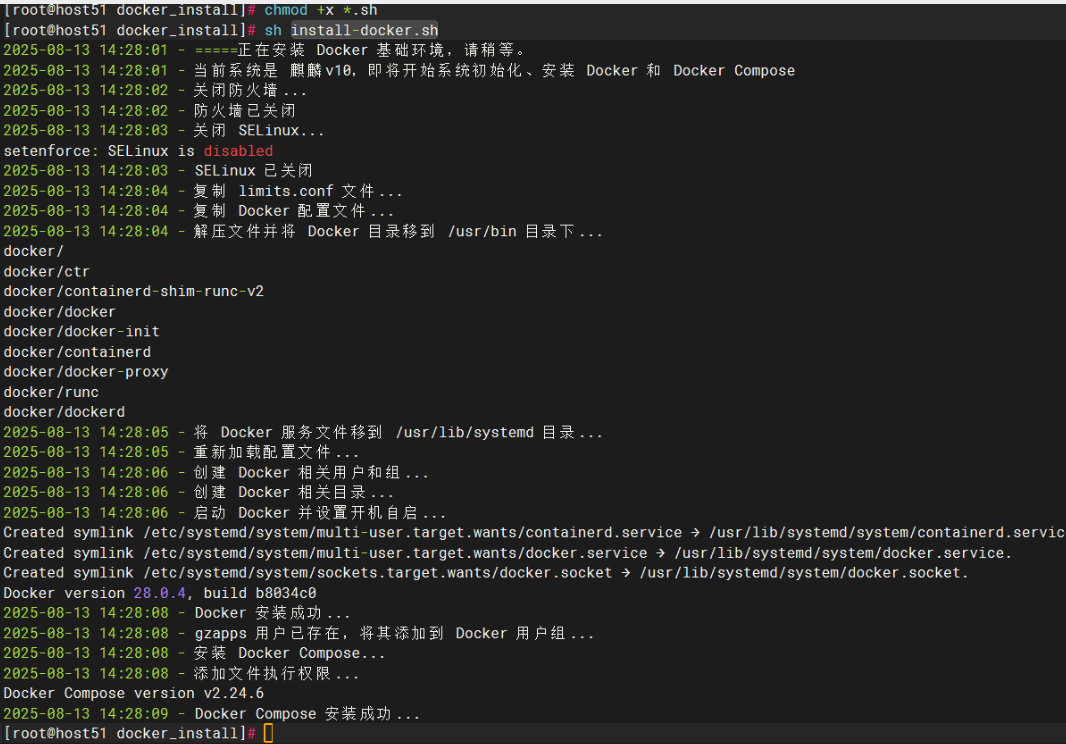

4.1. Deploy docker

Install Docker on all machines yourself; the steps are omitted here.

4.2. Deploy cri-dockerd

Install on all machines.

wget https://github.com/Mirantis/cri-dockerd/releases/download/v0.3.19/cri-dockerd-0.3.19.arm64.tgz
tar zxvf cri-dockerd-0.3.19.arm64.tgz
mv cri-dockerd/cri-dockerd /usr/bin/
ls -l /usr/bin/cri-dockerd
# Create the service unit file
cat >/usr/lib/systemd/system/cri-docker.service << "EOF"
[Unit]
Description=CRI Interface for Docker Application Container Engine
Documentation=https://docs.mirantis.com
After=network-online.target firewalld.service docker.service
Wants=network-online.target

[Service]
Type=notify
ExecStart=/usr/bin/cri-dockerd --network-plugin=cni --pod-infra-container-image=registry.aliyuncs.com/google_containers/pause:3.9
ExecReload=/bin/kill -s HUP $MAINPID
TimeoutSec=0
RestartSec=2
Restart=always

[Install]
WantedBy=multi-user.target
EOF
# Start the service and enable it at boot
systemctl daemon-reload
systemctl start cri-docker
systemctl enable cri-docker

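5. Deploy the load balancer

kube-apiserver is stateless, so it is accessed through an nginx proxy to keep the service available.

nginx acts as a reverse proxy in front of all kube-apiserver instances and provides health checking and load balancing. keepalived provides the VIP through which kube-apiserver is exposed.

nginx must not listen on the same address and port as a kube-apiserver instance. If nginx ran on a master host, it would need a different port such as 16443 to avoid a conflict with port 6443 of kube-apiserver. In this deployment nginx runs on the node hosts, so it listens on 6443, as in the configuration in 5.1.

5.1. Install and configure nginx (required on every load-balancer host, primary and backup)

Deploy directly from the rpm package. On Kylin V10, the official NGINX rpm package for CentOS 8 can be used as-is.

dnf -y install nginx-1.28.0-1.el8.ngx.aarch64.rpm

nginx configuration

Edit /etc/nginx/nginx.conf (vi /etc/nginx/nginx.conf) and add the following: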

stream {

# Upstream used for TCP (socket) forwarding

upstream socket_proxy {

hash $remote_addr consistent;

# Destination addresses and ports

server 192.168.120.51:6443 weight=5 max_fails=3 fail_timeout=30s;

server 192.168.120.52:6443 weight=5 max_fails=3 fail_timeout=30s;

server 192.168.120.53:6443 weight=5 max_fails=3 fail_timeout=30s;

}

# Forwarding service: connections to port 6443 on this host are passed to the addresses in the socket_proxy upstream

server {

listen 6443;

proxy_connect_timeout 1s;

proxy_timeout 3s;

proxy_pass socket_proxy;

}

}
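The three server lines in the upstream above follow one pattern, so when masters are added or replaced they can be regenerated from the list of kube-apiserver IPs. The sketch below assumes the same port and failure parameters as the configuration above; render_upstream_servers is a hypothetical helper name.

```shell
# Print one nginx "server" line per kube-apiserver IP, using the same
# port and failure parameters as the stream block in this guide.
render_upstream_servers() {
    for ip in "$@"; do
        printf 'server %s:6443 weight=5 max_fails=3 fail_timeout=30s;\n' "$ip"
    done
}
```

For example, `render_upstream_servers 192.168.120.51 192.168.120.52 192.168.120.53` prints the three lines used here. After editing nginx.conf, `nginx -t` checks the syntax before the service is started or reloaded.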

Start nginx and enable it at boot

# rm -f /etc/nginx/conf.d/default.conf
systemctl start nginx && systemctl enable nginx

5.2. Install and configure keepalived

1. Install keepalived

yum install -y keepalived

2. Edit the configuration file

cp /etc/keepalived/keepalived.conf /etc/keepalived/keepalived.conf.bak

vi /etc/keepalived/keepalived.conf

# kd01

cat > /etc/keepalived/keepalived.conf << "EOF"

global_defs {

router_id kd01

}

vrrp_script check_run {

script "/etc/keepalived/check_web.sh"

interval 5

weight 2

}

vrrp_instance VI_1 {

state MASTER

interface enp0s11

virtual_router_id 51

priority 100

advert_int 2

nopreempt

authentication {

auth_type PASS

auth_pass 2222

}

unicast_src_ip 192.168.120.54

unicast_peer {

192.168.120.55

192.168.120.56

}

virtual_ipaddress {

192.168.120.50

}

track_script {

check_run

}

}

EOF

# kd02

cat > /etc/keepalived/keepalived.conf << "EOF"

global_defs {

router_id kd02

}

vrrp_script check_run {

script "/etc/keepalived/check_web.sh"

interval 5

weight 2

}

vrrp_instance VI_1 {

state BACKUP

interface enp0s11

virtual_router_id 51

priority 90

advert_int 2

nopreempt

authentication {

auth_type PASS

auth_pass 2222

}

unicast_src_ip 192.168.120.55

unicast_peer {

192.168.120.54

192.168.120.56

}

virtual_ipaddress {

192.168.120.50

}

track_script {

check_run

}

}

EOF

# kd03

cat > /etc/keepalived/keepalived.conf << "EOF"

global_defs {

router_id kd03

}

vrrp_script check_run {

script "/etc/keepalived/check_web.sh"

interval 5

weight 2

}

vrrp_instance VI_1 {

state BACKUP

interface enp0s11

virtual_router_id 51

priority 80

advert_int 2

nopreempt

authentication {

auth_type PASS

auth_pass 2222

}

unicast_src_ip 192.168.120.56

unicast_peer {

192.168.120.54

192.168.120.55

}

virtual_ipaddress {

192.168.120.50

}

track_script {

check_run

}

}

EOF

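3. Write the nginx monitoring script

If nginx stops and cannot be restarted, the script also stops keepalived so that the VIP fails over to a backup host. The script is /etc/keepalived/check_web.sh: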

cat > /etc/keepalived/check_web.sh << "EOF"

#!/bin/bash

num=$(ps -C nginx --no-header | wc -l)

if [ $num -eq 0 ]; then

systemctl restart nginx

sleep 10

num=$(ps -C nginx --no-header | wc -l)

if [ $num -eq 0 ]; then

systemctl stop keepalived

fi

fi

EOF

chmod +x /etc/keepalived/check_web.sh

4. Start keepalived and enable it at boot

systemctl start keepalived
systemctl enable keepalived
systemctl status keepalived

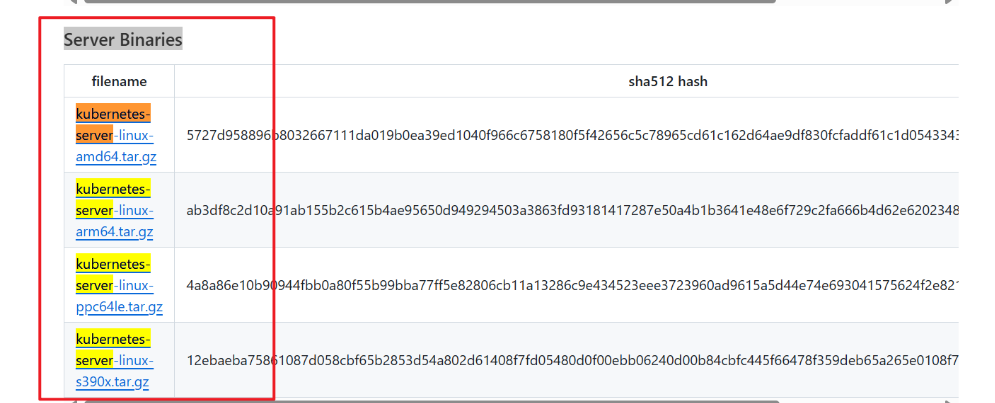

6. Kubernetes cluster deployment

- https://github.com/kubernetes/kubernetes/blob/master/CHANGELOG/CHANGELOG-1.28.md#downloads-for-v1281

Be sure to choose the ARM build.

6.1. Download and extract the package

wget https://dl.k8s.io/v1.28.15/kubernetes-server-linux-arm64.tar.gz
tar zxvf kubernetes-server-linux-arm64.tar.gz
cd kubernetes/server/bin/
cp kube-apiserver kube-controller-manager kube-scheduler kubectl /usr/local/bin/
mkdir -p /etc/kubernetes/ssl
mkdir -p /var/log/kubernetes
# Distribute the Kubernetes binaries
scp kube-apiserver kube-controller-manager kube-scheduler kubectl host52:/usr/local/bin/
scp kube-apiserver kube-controller-manager kube-scheduler kubectl host53:/usr/local/bin/

6.2. Deploy kube-apiserver

1. Create the apiserver certificate signing request file

cd /data/k8s-work/

cat > kube-apiserver-csr.json << "EOF"

{

"CN": "kubernetes",

"hosts": [

"127.0.0.1",

"192.168.120.50",

"192.168.120.51",

"192.168.120.52",

"192.168.120.53",

"192.168.120.151",

"192.168.120.152",

"10.96.0.1",

"kubernetes",

"kubernetes.default",

"kubernetes.default.svc",

"kubernetes.default.svc.cluster",

"kubernetes.default.svc.cluster.local"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Beijing",

"L": "Beijing",

"O": "kubemsb",

"OU": "CN"

}

]

}

EOF

# Generate the apiserver certificate and the token file

# Generates kube-apiserver.csr, kube-apiserver-key.pem, kube-apiserver.pem

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-apiserver-csr.json | cfssljson -bare kube-apiserver

# Generate token.csv

cat > token.csv << EOF

$(head -c 16 /dev/urandom | od -An -t x | tr -d ' '),kubelet-bootstrap,10001,"system:kubelet-bootstrap"

EOF

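Note: the hosts field of the file above must contain every Master, LB, and VIP address, and none of them may be omitted. A few spare IPs can be added to make later expansion easier.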
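The token.csv line above has four comma-separated fields: the token, the user name, the UID, and the quoted group. As a sketch of the same step split into two small functions (make_bootstrap_token and render_token_csv are hypothetical names), the token is built from 16 random bytes printed as 32 hexadecimal characters:

```shell
# Generate a 32-character lowercase hex token from 16 random bytes.
make_bootstrap_token() {
    head -c 16 /dev/urandom | od -An -tx1 | tr -d ' \n'
}

# Print a token.csv line in the format used above: token,user,uid,"group".
render_token_csv() {
    printf '%s,kubelet-bootstrap,10001,"system:kubelet-bootstrap"\n' "$1"
}
```

Usage could look like `render_token_csv "$(make_bootstrap_token)" > token.csv`. Whatever token ends up in this file is the one the kubelet bootstrap kubeconfig has to carry later.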

2. Create the configuration file

cat > /etc/kubernetes/kube-apiserver.conf << "EOF"
KUBE_APISERVER_OPTS=" \
  --bind-address=192.168.120.51 \
  --advertise-address=192.168.120.51 \
  --secure-port=6443 \
  --allow-privileged=true \
  --authorization-mode=Node,RBAC \
  --anonymous-auth=false \
  --runtime-config=api/all=true \
  --enable-admission-plugins=NamespaceLifecycle,NodeRestriction,LimitRanger,ServiceAccount,DefaultStorageClass,ResourceQuota \
  --enable-bootstrap-token-auth \
  --token-auth-file=/etc/kubernetes/token.csv \
  --service-cluster-ip-range=10.96.0.0/16 \
  --service-node-port-range=30000-32767 \
  --tls-cert-file=/etc/kubernetes/ssl/kube-apiserver.pem \
  --tls-private-key-file=/etc/kubernetes/ssl/kube-apiserver-key.pem \
  --client-ca-file=/etc/kubernetes/ssl/ca.pem \
  --kubelet-client-certificate=/etc/kubernetes/ssl/kube-apiserver.pem \
  --kubelet-client-key=/etc/kubernetes/ssl/kube-apiserver-key.pem \
  --service-account-key-file=/etc/kubernetes/ssl/ca-key.pem \
  --service-account-signing-key-file=/etc/kubernetes/ssl/ca-key.pem \
  --service-account-issuer=api \
  --etcd-servers=https://192.168.120.51:2379,https://192.168.120.52:2379,https://192.168.120.53:2379 \
  --etcd-cafile=/etc/etcd/ssl/ca.pem \
  --etcd-certfile=/etc/etcd/ssl/etcd.pem \
  --etcd-keyfile=/etc/etcd/ssl/etcd-key.pem \
  --enable-aggregator-routing=true \
  --requestheader-allowed-names=kubernetes \
  --requestheader-extra-headers-prefix=X-Remote-Extra- \
  --requestheader-group-headers=X-Remote-Group \
  --requestheader-username-headers=X-Remote-User \
  --apiserver-count=3 \
  --event-ttl=1h \
  --audit-log-maxage=30 \
  --audit-log-maxbackup=3 \
  --audit-log-maxsize=100 \
  --audit-log-path=/var/log/kube-apiserver-audit.log \
  --v=4"
EOF

3. Copy the certificates

cd /data/k8s-work/
cp ca*.pem /etc/kubernetes/ssl/
cp kube-apiserver*.pem /etc/kubernetes/ssl/
cp token.csv /etc/kubernetes/

4. Create the apiserver systemd unit file

cat > /usr/lib/systemd/system/kube-apiserver.service << "EOF"
[Unit]
Description=Kubernetes API Server
Documentation=https://github.com/kubernetes/kubernetes

[Service]
EnvironmentFile=-/etc/kubernetes/kube-apiserver.conf
ExecStart=/usr/local/bin/kube-apiserver $KUBE_APISERVER_OPTS
Restart=on-failure
RestartSec=5
Type=notify
LimitNOFILE=65536

[Install]
WantedBy=multi-user.target
EOF

5. Sync the files to the other master nodes

scp /etc/kubernetes/ssl/ca*.pem host52:/etc/kubernetes/ssl
scp /etc/kubernetes/ssl/ca*.pem host53:/etc/kubernetes/ssl
scp /etc/kubernetes/ssl/kube-apiserver*.pem host52:/etc/kubernetes/ssl
scp /etc/kubernetes/ssl/kube-apiserver*.pem host53:/etc/kubernetes/ssl
scp /etc/kubernetes/token.csv host52:/etc/kubernetes
scp /etc/kubernetes/token.csv host53:/etc/kubernetes
# After copying, change --bind-address and --advertise-address to the IP of the target host
scp /etc/kubernetes/kube-apiserver.conf host52:/etc/kubernetes/kube-apiserver.conf
scp /etc/kubernetes/kube-apiserver.conf host53:/etc/kubernetes/kube-apiserver.conf
scp /usr/lib/systemd/system/kube-apiserver.service host52:/usr/lib/systemd/system/kube-apiserver.service
scp /usr/lib/systemd/system/kube-apiserver.service host53:/usr/lib/systemd/system/kube-apiserver.service
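The copied kube-apiserver.conf still carries the IP of master1 in --bind-address and --advertise-address, and forgetting to change it on master2 or master3 stops the service from binding. One way to avoid the manual edit is to rewrite only those two flags while copying. This is a sketch, not part of the original procedure; retarget_apiserver_conf is a hypothetical helper and it leaves --etcd-servers and every other flag untouched.

```shell
# Print a copy of kube-apiserver.conf with --bind-address and
# --advertise-address rewritten to the given IP. The source file is not
# modified. $1 = source file, $2 = IP of the target master.
retarget_apiserver_conf() {
    sed -E "s#(--(bind|advertise)-address=)[0-9.]+#\1$2#g" "$1"
}
```

For example, `retarget_apiserver_conf /etc/kubernetes/kube-apiserver.conf 192.168.120.52 | ssh host52 'cat > /etc/kubernetes/kube-apiserver.conf'` would replace the plain scp of that file for host52.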

6. Start the service

# Start (on all three masters):

systemctl daemon-reload

systemctl start kube-apiserver

systemctl enable kube-apiserver

# Verify (a 401 Unauthorized response like the one below means the apiserver is reachable):

curl --insecure https://192.168.120.51:6443/

curl --insecure https://192.168.120.52:6443/

curl --insecure https://192.168.120.53:6443/

curl --insecure https://192.168.120.50:6443/

{

"kind": "Status",

"apiVersion": "v1",

"metadata": {},

"status": "Failure",

"message": "Unauthorized",

"reason": "Unauthorized",

"code": 401

}

6.3. Deploy kube-controller-manager

1. Create the kube-controller-manager certificate signing request file

cd /data/k8s-work

cat > kube-controller-manager-csr.json << "EOF"

{

"CN": "system:kube-controller-manager",

"key": {

"algo": "rsa",

"size": 2048

},

"hosts": [

"127.0.0.1",

"192.168.120.51",

"192.168.120.52",

"192.168.120.53"

],

"names": [

{

"C": "CN",

"ST": "Beijing",

"L": "Beijing",

"O": "system:kube-controller-manager",

"OU": "system"

}

]

}

EOF

2. Create the kube-controller-manager certificate files

- kube-controller-manager.csr

- kube-controller-manager-csr.json

- kube-controller-manager-key.pem

- kube-controller-manager.pem

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-controller-manager-csr.json | cfssljson -bare kube-controller-manager

3. Create kube-controller-manager.kubeconfig for kube-controller-manager

kubectl config set-cluster kubernetes \
  --certificate-authority=./ca.pem --embed-certs=true \
  --server=https://192.168.120.50:6443 \
  --kubeconfig=kube-controller-manager.kubeconfig
kubectl config set-credentials system:kube-controller-manager \
  --client-certificate=./kube-controller-manager.pem \
  --client-key=./kube-controller-manager-key.pem \
  --embed-certs=true \
  --kubeconfig=kube-controller-manager.kubeconfig
kubectl config set-context system:kube-controller-manager \
  --cluster=kubernetes --user=system:kube-controller-manager \
  --kubeconfig=kube-controller-manager.kubeconfig
kubectl config use-context system:kube-controller-manager \
  --kubeconfig=kube-controller-manager.kubeconfig

4. Create the kube-controller-manager configuration file

cat > kube-controller-manager.conf << "EOF"
KUBE_CONTROLLER_MANAGER_OPTS=" \
  --secure-port=10257 \
  --bind-address=127.0.0.1 \
  --kubeconfig=/etc/kubernetes/kube-controller-manager.kubeconfig \
  --service-cluster-ip-range=10.96.0.0/16 \
  --cluster-name=kubernetes \
  --cluster-signing-cert-file=/etc/kubernetes/ssl/ca.pem \
  --cluster-signing-key-file=/etc/kubernetes/ssl/ca-key.pem \
  --allocate-node-cidrs=true \
  --cluster-cidr=10.244.0.0/16 \
  --root-ca-file=/etc/kubernetes/ssl/ca.pem \
  --service-account-private-key-file=/etc/kubernetes/ssl/ca-key.pem \
  --leader-elect=true \
  --feature-gates=RotateKubeletServerCertificate=true \
  --controllers=*,bootstrapsigner,tokencleaner \
  --tls-cert-file=/etc/kubernetes/ssl/kube-controller-manager.pem \
  --tls-private-key-file=/etc/kubernetes/ssl/kube-controller-manager-key.pem \
  --use-service-account-credentials=true \
  --cluster-signing-duration=87600h0m0s \
  --v=2"
EOF

5. Create the systemd unit file

cat > /usr/lib/systemd/system/kube-controller-manager.service << "EOF"
[Unit]
Description=Kubernetes Controller Manager
Documentation=https://github.com/kubernetes/kubernetes

[Service]
EnvironmentFile=/etc/kubernetes/kube-controller-manager.conf
ExecStart=/usr/local/bin/kube-controller-manager $KUBE_CONTROLLER_MANAGER_OPTS
Restart=on-failure
RestartSec=5

[Install]
WantedBy=multi-user.target
EOF

6. Sync the files to the master nodes

cp kube-controller-manager*.pem /etc/kubernetes/ssl/
cp kube-controller-manager.kubeconfig /etc/kubernetes/
cp kube-controller-manager.conf /etc/kubernetes/
scp kube-controller-manager*.pem host52:/etc/kubernetes/ssl/
scp kube-controller-manager*.pem host53:/etc/kubernetes/ssl/
scp kube-controller-manager.kubeconfig kube-controller-manager.conf host52:/etc/kubernetes/
scp kube-controller-manager.kubeconfig kube-controller-manager.conf host53:/etc/kubernetes/
scp /usr/lib/systemd/system/kube-controller-manager.service host52:/usr/lib/systemd/system/
scp /usr/lib/systemd/system/kube-controller-manager.service host53:/usr/lib/systemd/system/

7. Start the service

systemctl daemon-reload
systemctl start kube-controller-manager
systemctl enable --now kube-controller-manager
systemctl status kube-controller-manager

6.4. Deploy kube-scheduler

1. Create the kube-scheduler certificate signing request file

cd /data/k8s-work

cat > kube-scheduler-csr.json << "EOF"

{

"CN": "system:kube-scheduler",

"hosts": [],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Beijing",

"L": "Beijing",

"O": "system:kube-scheduler",

"OU": "system"

}

]

}

EOF

2. Generate the kube-scheduler certificate

- kube-scheduler.csr

- kube-scheduler-csr.json

- kube-scheduler-key.pem

- kube-scheduler.pem

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-scheduler-csr.json | cfssljson -bare kube-scheduler

3. Create the kubeconfig for kube-scheduler

kubectl config set-cluster kubernetes \
  --certificate-authority=./ca.pem \
  --embed-certs=true \
  --server=https://192.168.120.50:6443 \
  --kubeconfig=kube-scheduler.kubeconfig
kubectl config set-credentials system:kube-scheduler \
  --client-certificate=./kube-scheduler.pem \
  --client-key=./kube-scheduler-key.pem \
  --embed-certs=true \
  --kubeconfig=kube-scheduler.kubeconfig
kubectl config set-context system:kube-scheduler \
  --cluster=kubernetes \
  --user=system:kube-scheduler \
  --kubeconfig=kube-scheduler.kubeconfig
kubectl config use-context system:kube-scheduler \
  --kubeconfig=kube-scheduler.kubeconfig

4. Create the service configuration file

cat > kube-scheduler.conf << "EOF"
KUBE_SCHEDULER_OPTS="--leader-elect=true \
  --kubeconfig=/etc/kubernetes/kube-scheduler.kubeconfig \
  --bind-address=127.0.0.1 \
  --v=2"
EOF

5. Create the systemd unit file

cat > /usr/lib/systemd/system/kube-scheduler.service << "EOF"
[Unit]
Description=Kubernetes Scheduler
Documentation=https://github.com/kubernetes/kubernetes

[Service]
EnvironmentFile=-/etc/kubernetes/kube-scheduler.conf
ExecStart=/usr/local/bin/kube-scheduler $KUBE_SCHEDULER_OPTS
Restart=on-failure
RestartSec=5

[Install]
WantedBy=multi-user.target
EOF

6. Sync the files to the master nodes

cp kube-scheduler*.pem /etc/kubernetes/ssl/
cp kube-scheduler.kubeconfig /etc/kubernetes/
cp kube-scheduler.conf /etc/kubernetes/
scp kube-scheduler*.pem host52:/etc/kubernetes/ssl/
scp kube-scheduler*.pem host53:/etc/kubernetes/ssl/
scp kube-scheduler.kubeconfig kube-scheduler.conf host52:/etc/kubernetes/
scp kube-scheduler.kubeconfig kube-scheduler.conf host53:/etc/kubernetes/
scp /usr/lib/systemd/system/kube-scheduler.service host52:/usr/lib/systemd/system/
scp /usr/lib/systemd/system/kube-scheduler.service host53:/usr/lib/systemd/system/

7. Start the service

systemctl daemon-reload
systemctl start kube-scheduler
systemctl enable --now kube-scheduler
systemctl status kube-scheduler

6.5. Deploy kubectl

1. Create the kubectl certificate signing request file

cd /data/k8s-work

cat > admin-csr.json << "EOF"

{

"CN": "admin",

"hosts": [],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Beijing",

"L": "Beijing",

"O": "system:masters",

"OU": "system"

}

]

}

EOF

# Generate the certificate files

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes admin-csr.json | cfssljson -bare admin

# Copy the files to the target directory

cp admin*.pem /etc/kubernetes/ssl/

2. Generate the kube.config file

kube.config is the configuration file for kubectl. It contains everything needed to access the apiserver, such as the apiserver address, the CA certificate, and the client certificate that kubectl itself uses.

cd /etc/kubernetes/ssl/
# vip
kubectl config set-cluster kubernetes \
  --certificate-authority=./ca.pem \
  --embed-certs=true \
  --server=https://192.168.120.50:6443 \
  --kubeconfig=kube.config
kubectl config set-credentials cluster-admin \
  --client-certificate=./admin.pem \
  --client-key=./admin-key.pem \
  --embed-certs=true \
  --kubeconfig=kube.config
kubectl config set-context kubernetes \
  --cluster=kubernetes \
  --user=cluster-admin \
  --kubeconfig=kube.config
kubectl config use-context kubernetes --kubeconfig=kube.config

3. Install the kubectl configuration file and create the role bindings

mkdir ~/.kube
cp kube.config ~/.kube/config
kubectl create clusterrolebinding kube-apiserver:kubelet-apis \
  --clusterrole=system:kubelet-api-admin --user kubernetes --kubeconfig=/root/.kube/config
kubectl create clusterrolebinding kubelet-bootstrap \
  --clusterrole=system:node-bootstrapper \
  --user=kubelet-bootstrap
kubectl create clusterrolebinding node-client-auto-approve-csr --clusterrole=system:certificates.k8s.io:certificatesigningrequests:nodeclient \
  --user=kubelet-bootstrap

4. Check the cluster status

# Show cluster information
kubectl cluster-info
# Show the status of the cluster components
kubectl get componentstatuses
# Show the resource objects in all namespaces
kubectl get all --all-namespaces

5. Sync the kubectl configuration file to the other master nodes

# On k8s-master2, create the directory
mkdir /root/.kube
# On k8s-master3, create the directory
mkdir /root/.kube
# Copy the configuration file over
scp /root/.kube/config host52:/root/.kube/config
scp /root/.kube/config host53:/root/.kube/config

7. Worker node deployment

7.1. Deploy kubelet

1. Create the configuration file

cd /data/k8s-work
cat > kubelet.conf << "EOF"
KUBELET_OPTS=" \
  --kubeconfig=/etc/kubernetes/kubelet.kubeconfig \
  --bootstrap-kubeconfig=/etc/kubernetes/kubelet-bootstrap.kubeconfig \
  --config=/etc/kubernetes/kubelet.yml \
  --cert-dir=/etc/kubernetes/ssl \
  --pod-infra-container-image=registry.aliyuncs.com/google_containers/pause:3.9 \
  --container-runtime-endpoint=unix:///var/run/cri-dockerd.sock \
  --v=2"
EOF

2. Create the kubelet configuration file (KubeletConfiguration)

cat > kubelet.yml << EOF
kind: KubeletConfiguration
apiVersion: kubelet.config.k8s.io/v1beta1
address: 0.0.0.0
port: 10250
readOnlyPort: 10255
cgroupDriver: systemd
authentication:
  anonymous:
    enabled: false
  webhook:
    cacheTTL: 2m0s
    enabled: true
  x509:
    clientCAFile: /etc/kubernetes/ssl/ca.pem
authorization:
  mode: Webhook
  webhook:
    cacheAuthorizedTTL: 5m0s
    cacheUnauthorizedTTL: 30s
clusterDNS:
- 10.96.0.2
clusterDomain: cluster.local
evictionHard:
  imagefs.available: 15%
  memory.available: 100Mi
  nodefs.available: 10%
  nodefs.inodesFree: 5%
maxOpenFiles: 1000000
maxPods: 110
serializeImagePulls: false
hairpinMode: promiscuous-bridge
failSwapOn: true
EOF

3. Create kubelet-bootstrap.kubeconfig

cd /data/k8s-work

# The token must match the one in token.csv

#BOOTSTRAP_TOKEN=$(awk -F "," '{print $1}' /etc/kubernetes/token.csv)

BOOTSTRAP_TOKEN=7c569c3a779eaf221d3bf3c2611daac1

kubectl config set-cluster kubernetes \

--certificate-authority=./ca.pem \

--embed-certs=true \

--server=https://192.168.120.50:6443 \

--kubeconfig=kubelet-bootstrap.kubeconfig

kubectl config set-credentials kubelet-bootstrap \

--token=${BOOTSTRAP_TOKEN} \

--kubeconfig=kubelet-bootstrap.kubeconfig

kubectl config set-context default \

--cluster=kubernetes \

--user=kubelet-bootstrap \

--kubeconfig=kubelet-bootstrap.kubeconfig

kubectl config use-context default --kubeconfig=kubelet-bootstrap.kubeconfig

kubectl create clusterrolebinding cluster-system-anonymous \

--clusterrole=cluster-admin --user=kubelet-bootstrap

kubectl create clusterrolebinding kubelet-bootstrap \

--clusterrole=system:node-bootstrapper \

--user=kubelet-bootstrap --kubeconfig=kubelet-bootstrap.kubeconfig
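The hard-coded BOOTSTRAP_TOKEN above only works if it is identical to the first field of /etc/kubernetes/token.csv; a mismatch shows up as the kubelet failing to authenticate while bootstrapping. The sketch below reads the token from the file, as the commented-out awk line suggests, and checks that a kubeconfig carries the same value. The function names are hypothetical, and the check assumes kubectl stored the credential as a `token:` field in the kubeconfig.

```shell
# Print the token (first comma-separated field) from the first line of token.csv.
read_bootstrap_token() {
    awk -F, 'NR == 1 { print $1 }' "$1"
}

# Succeed only if the kubeconfig in $1 contains a "token:" line whose
# value equals $2 (optionally wrapped in double quotes).
kubeconfig_has_token() {
    grep -qE "^[[:space:]]*token:[[:space:]]*\"?$2\"?[[:space:]]*\$" "$1"
}
```

A possible check on master1 is `kubeconfig_has_token kubelet-bootstrap.kubeconfig "$(read_bootstrap_token /etc/kubernetes/token.csv)" && echo "token matches"`.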

4.创建 kubelet 服务启动管理文件

cat > kubelet.service << "EOF" [Unit] Description=Kubernetes Kubelet Documentation=https://github.com/kubernetes/kubernetes After=docker.service Requires=docker.service [Service] EnvironmentFile=/etc/kubernetes/kubelet.conf ExecStart=/usr/local/bin/kubelet $KUBELET_OPTS Restart=on-failure LimitNOFILE=65536 RestartSec=5 [Install] WantedBy=multi-user.target EOF

5. Sync the files to the cluster nodes

Copy the files generated on k8s-master1 to the other hosts:

for h in host52 host53 host54 host55 host56; do
  scp kubelet-bootstrap.kubeconfig kubelet.conf kubelet.yml root@$h:/etc/kubernetes/
  scp kubelet.service root@$h:/usr/lib/systemd/system/kubelet.service
done

for h in host54 host55 host56; do
  scp ca.pem root@$h:/etc/kubernetes/ssl
done

# Distribute the binaries to the nodes
cd /tmp/kubernetes/server/bin/
for h in host52 host53 host54 host55 host56; do
  scp kubelet kube-scheduler kube-proxy root@$h:/usr/local/bin/
done

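6. Start the service

systemctl daemon-reload
systemctl enable --now kubelet
systemctl status kubelet

# Check from a master
[root@host51 k8s-work]# kubectl get nodes
NAME     STATUS     ROLES    AGE     VERSION
host51   NotReady   <none>   2m59s   v1.28.15
host52   NotReady   <none>   9s      v1.28.15
host53   NotReady   <none>   5s      v1.28.15
host54   NotReady   <none>   13m     v1.28.15
host55   NotReady   <none>   12m     v1.28.15
host56   NotReady   <none>   12m     v1.28.15

The nodes stay NotReady until the network plugin is deployed in section 8.

After kubelet starts, the kubeconfig file kubelet.kubeconfig is generated automatically under /etc/kubernetes.

The automatically issued certificates kubelet-client-2025-08-14-08-47-30.pem, kubelet.crt, and kubelet.key appear in the ssl directory.

If a node later misbehaves and its certificates have to be reissued, delete the existing certificates under ssl first; otherwise the node fails to join the cluster.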

[root@host54 kubernetes]# tree
.
├── kubelet-bootstrap.kubeconfig
├── kubelet.json
├── kubelet.kubeconfig
└── ssl
    ├── ca.pem
    ├── kubelet-client-2025-08-14-08-47-30.pem
    ├── kubelet-client-current.pem -> /etc/kubernetes/ssl/kubelet-client-2025-08-14-08-47-30.pem
    ├── kubelet.crt
    └── kubelet.key

1 directory, 8 files
[root@host54 kubernetes]#
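The note about deleting the old certificates before a node re-joins can be sketched as a small helper. reset_kubelet_certs is a hypothetical name; the file list is taken from the tree listing above, and also removing kubelet.kubeconfig is an assumption (kubelet only falls back to the bootstrap kubeconfig when that file is absent). ca.pem and kubelet-bootstrap.kubeconfig are left in place.

```shell
# Remove kubelet-issued credentials so the node can bootstrap again.
# $1: kubelet config directory (assumed to be /etc/kubernetes, as in the listing)
reset_kubelet_certs() (
  dir=${1:-/etc/kubernetes}
  # The glob also matches the kubelet-client-current.pem symlink.
  rm -f "$dir"/ssl/kubelet-client-*.pem \
        "$dir"/ssl/kubelet.crt \
        "$dir"/ssl/kubelet.key \
        "$dir"/kubelet.kubeconfig
)

# Usage on the affected node:
#   systemctl stop kubelet
#   reset_kubelet_certs /etc/kubernetes
#   systemctl start kubelet
```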

7.2. Deploy kube-proxy

1. Create the service configuration file

cd /data/k8s-work
cat > kube-proxy.yaml << "EOF"
apiVersion: kubeproxy.config.k8s.io/v1alpha1
bindAddress: 0.0.0.0
clientConnection:
  kubeconfig: /etc/kubernetes/kube-proxy.kubeconfig
clusterCIDR: 10.244.0.0/16
healthzBindAddress: 0.0.0.0:10256
kind: KubeProxyConfiguration
metricsBindAddress: 0.0.0.0:10249
mode: "ipvs"
EOF

2. Create the service startup files

cat > kube-proxy.conf << "EOF"
KUBE_PROXY_OPTS=" \
  --config=/etc/kubernetes/kube-proxy.yaml \
  --v=2"
EOF

cat > kube-proxy.service << "EOF"
[Unit]
Description=Kubernetes Kube-Proxy Server
Documentation=https://github.com/kubernetes/kubernetes
After=network.target

[Service]
EnvironmentFile=/etc/kubernetes/kube-proxy.conf
ExecStart=/usr/local/bin/kube-proxy $KUBE_PROXY_OPTS
Restart=on-failure
RestartSec=5
LimitNOFILE=65536

[Install]
WantedBy=multi-user.target
EOF

3. Create the kube-proxy certificate signing request file

cd /data/k8s-work

cat > kube-proxy-csr.json << "EOF"
{
  "CN": "system:kube-proxy",
  "key": {
    "algo": "rsa",
    "size": 2048
  },
  "names": [
    {
      "C": "CN",
      "ST": "Beijing",
      "L": "Beijing",
      "O": "kubemsb",
      "OU": "CN"
    }
  ]
}
EOF

# Generate the certificate files: kube-proxy.pem, kube-proxy-key.pem, kube-proxy.csr
cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-proxy-csr.json | cfssljson -bare kube-proxy

4. Create the kubeconfig file

This generates kube-proxy.kubeconfig:

kubectl config set-cluster kubernetes \
  --certificate-authority=./ca.pem \
  --embed-certs=true \
  --server=https://192.168.120.50:6443 \
  --kubeconfig=kube-proxy.kubeconfig

kubectl config set-credentials kube-proxy \
  --client-certificate=./kube-proxy.pem \
  --client-key=./kube-proxy-key.pem \
  --embed-certs=true \
  --kubeconfig=kube-proxy.kubeconfig

kubectl config set-context default \
  --cluster=kubernetes --user=kube-proxy \
  --kubeconfig=kube-proxy.kubeconfig

kubectl config use-context default \
  --kubeconfig=kube-proxy.kubeconfig

5. Sync the files to the cluster hosts

cp kube-proxy.yaml kube-proxy.conf kube-proxy.kubeconfig /etc/kubernetes/
cp kube-proxy*pem /etc/kubernetes/ssl
cp kube-proxy.service /usr/lib/systemd/system/kube-proxy.service

for h in host52 host53 host54 host55 host56; do
  scp kube-proxy.yaml kube-proxy.conf kube-proxy.kubeconfig root@$h:/etc/kubernetes/
  scp kube-proxy*pem root@$h:/etc/kubernetes/ssl
  scp kube-proxy.service root@$h:/usr/lib/systemd/system/kube-proxy.service
done

6. Start the service

systemctl daemon-reload
systemctl enable --now kube-proxy
systemctl status kube-proxy

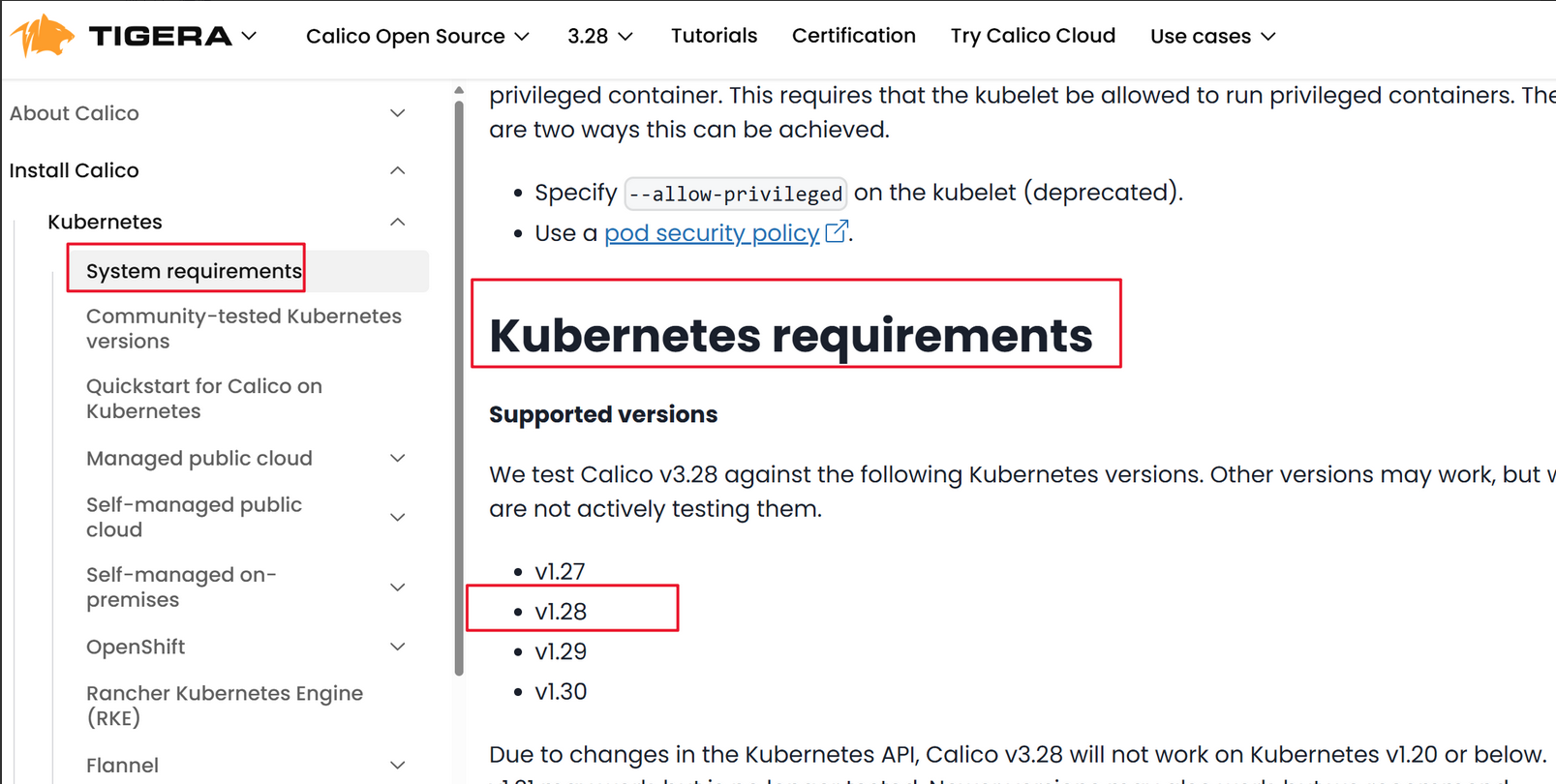

8. 网络组件部署 Calico(部署 CNI 网络)

1.下载文件

在 calico 的官网进行下载对应的 yaml 文件,在 master 节点上创建

# kubectl create -f https://raw.githubusercontent.com/projectcalico/calico/v3.28.5/manifests/tigera-operator.yaml # kubectl create -f https://raw.githubusercontent.com/projectcalico/calico/v3.28.5/manifests/custom-resources.yaml #先使用wget下载后,检查文件正常后在进行部署 wget https://raw.githubusercontent.com/projectcalico/calico/v3.28.5/manifests/tigera-operator.yaml wget https://raw.githubusercontent.com/projectcalico/calico/v3.28.5/manifests/custom-resources.yaml

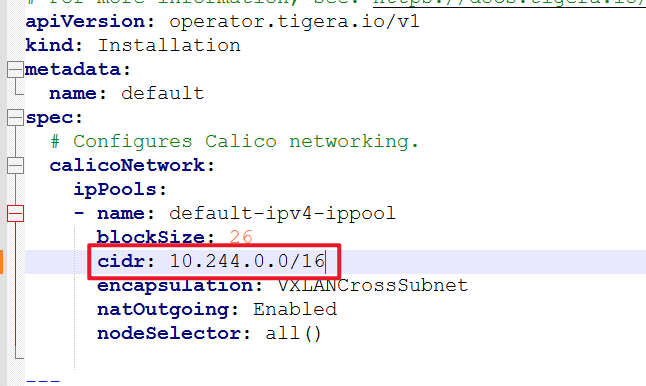

2.修改文件

custom-resources.yaml 文件默认的 pod 网络为 192.168.0.0/16,我们定义的 pod 网络为 10.244.0.0/16,需要修改后再执行。

cidr: 192.168.0.0/16 修改成 cidr: 10.244.0.0/16
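The cidr edit can also be scripted rather than done by hand. This is a minimal sketch assuming GNU sed (as shipped on the Kylin hosts) and the default value 192.168.0.0/16 in the downloaded file; set_calico_pod_cidr is a hypothetical helper name.

```shell
# Replace the default Calico pool CIDR in a custom-resources.yaml file.
# $1: file to edit in place, $2: new pod CIDR (10.244.0.0/16 in this guide)
set_calico_pod_cidr() (
  file=$1
  cidr=$2
  sed -i "s#cidr: 192\.168\.0\.0/16#cidr: ${cidr}#" "$file"
  # Fail if the new value is not present afterwards.
  grep -qF "cidr: ${cidr}" "$file"
)

# Usage:
#   set_calico_pod_cidr custom-resources.yaml 10.244.0.0/16
```

The value should stay identical to clusterCIDR in kube-proxy.yaml, which this guide also sets to 10.244.0.0/16.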

8.1. Deploy the plugin

kubectl create -f tigera-operator.yaml
# Once all pods in tigera-operator are Running, run kubectl create -f custom-resources.yaml
kubectl create -f custom-resources.yaml
kubectl get pods -n calico-system

# With the network plugin deployed, the nodes become Ready.
[root@host51 ~]# kubectl get nodes
NAME     STATUS     ROLES    AGE   VERSION
host51   Ready      <none>   30m   v1.28.15
host52   Ready      <none>   27m   v1.28.15
host53   NotReady   <none>   27m   v1.28.15
host54   NotReady   <none>   40m   v1.28.15
host55   Ready      <none>   40m   v1.28.15
host56   Ready      <none>   40m   v1.28.15
[root@host51 ~]#
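In the listing above, host53 and host54 are still NotReady at the time of capture. A small filter over the `kubectl get nodes` output makes the stragglers easy to spot while waiting; not_ready_nodes is a hypothetical helper, and it treats any STATUS other than exactly "Ready" (including "Ready,SchedulingDisabled") as not ready.

```shell
# Read `kubectl get nodes` output on stdin and print the names of nodes
# whose STATUS column is anything other than "Ready".
not_ready_nodes() {
  awk 'NR > 1 && $2 != "Ready" { print $1 }'
}

# Usage:
#   kubectl get nodes | not_ready_nodes
# Alternatively, block until every node reports Ready:
#   kubectl wait --for=condition=Ready node --all --timeout=300s
```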

8.2. Authorize the apiserver to access kubelet

cat > apiserver-to-kubelet-rbac.yaml << "EOF"
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  annotations:
    rbac.authorization.kubernetes.io/autoupdate: "true"
  labels:
    kubernetes.io/bootstrapping: rbac-defaults
  name: system:kube-apiserver-to-kubelet
rules:
  - apiGroups:
      - ""
    resources:
      - nodes/proxy
      - nodes/stats
      - nodes/log
      - nodes/spec
      - nodes/metrics
      - pods/log
    verbs:
      - "*"
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: system:kube-apiserver
  namespace: ""
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: system:kube-apiserver-to-kubelet
subjects:
  - apiGroup: rbac.authorization.k8s.io
    kind: User
    name: kubernetes
EOF

# Apply the manifest
kubectl apply -f apiserver-to-kubelet-rbac.yaml

8.3. Deploy CoreDNS

CoreDNS resolves Service names inside the cluster.

cat > coredns.yaml << "EOF"

apiVersion: v1
kind: ServiceAccount
metadata:
  name: coredns
  namespace: kube-system
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  labels:
    kubernetes.io/bootstrapping: rbac-defaults
  name: system:coredns
rules:
  - apiGroups:
      - ""
    resources:
      - endpoints
      - services
      - pods
      - namespaces
    verbs:
      - list
      - watch
  - apiGroups:
      - discovery.k8s.io
    resources:
      - endpointslices
    verbs:
      - list
      - watch
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  annotations:
    rbac.authorization.kubernetes.io/autoupdate: "true"
  labels:
    kubernetes.io/bootstrapping: rbac-defaults
  name: system:coredns
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: system:coredns
subjects:
  - kind: ServiceAccount
    name: coredns
    namespace: kube-system
---
apiVersion: v1
kind: ConfigMap
metadata:
  name: coredns
  namespace: kube-system
data:
  Corefile: |
    .:53 {
        errors
        health {
          lameduck 5s
        }
        ready
        kubernetes cluster.local in-addr.arpa ip6.arpa {
          fallthrough in-addr.arpa ip6.arpa
        }
        prometheus :9153
        forward . /etc/resolv.conf {
          max_concurrent 1000
        }
        cache 30
        loop
        reload
        loadbalance
    }
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: coredns
  namespace: kube-system
  labels:
    k8s-app: kube-dns
    kubernetes.io/name: "CoreDNS"
spec:
  # replicas: not specified here:
  # 1. Default is 1.
  # 2. Will be tuned in real time if DNS horizontal auto-scaling is turned on.
  strategy:
    type: RollingUpdate
    rollingUpdate:
      maxUnavailable: 1
  selector:
    matchLabels:
      k8s-app: kube-dns
  template:
    metadata:
      labels:
        k8s-app: kube-dns
    spec:
      priorityClassName: system-cluster-critical
      serviceAccountName: coredns
      tolerations:
        - key: "CriticalAddonsOnly"
          operator: "Exists"
      nodeSelector:
        kubernetes.io/os: linux
      affinity:
        podAntiAffinity:
          preferredDuringSchedulingIgnoredDuringExecution:
            - weight: 100
              podAffinityTerm:
                labelSelector:
                  matchExpressions:
                    - key: k8s-app
                      operator: In
                      values: ["kube-dns"]
                topologyKey: kubernetes.io/hostname
      containers:
        - name: coredns
          image: coredns/coredns:1.10.1
          imagePullPolicy: IfNotPresent
          resources:
            limits:
              memory: 170Mi
            requests:
              cpu: 100m
              memory: 70Mi
          args: [ "-conf", "/etc/coredns/Corefile" ]
          volumeMounts:
            - name: config-volume
              mountPath: /etc/coredns
              readOnly: true
          ports:
            - containerPort: 53
              name: dns
              protocol: UDP
            - containerPort: 53
              name: dns-tcp
              protocol: TCP
            - containerPort: 9153
              name: metrics
              protocol: TCP
          securityContext:
            allowPrivilegeEscalation: false
            capabilities:
              add:
                - NET_BIND_SERVICE
              drop:
                - all
            readOnlyRootFilesystem: true
          livenessProbe:
            httpGet:
              path: /health
              port: 8080
              scheme: HTTP
            initialDelaySeconds: 60
            timeoutSeconds: 5
            successThreshold: 1
            failureThreshold: 5
          readinessProbe:
            httpGet:
              path: /ready
              port: 8181
              scheme: HTTP
      dnsPolicy: Default
      volumes:
        - name: config-volume
          configMap:
            name: coredns
            items:
              - key: Corefile
                path: Corefile
---
apiVersion: v1
kind: Service
metadata:
  name: kube-dns
  namespace: kube-system
  annotations:
    prometheus.io/port: "9153"
    prometheus.io/scrape: "true"
  labels:
    k8s-app: kube-dns
    kubernetes.io/cluster-service: "true"
    kubernetes.io/name: "CoreDNS"
spec:
  selector:
    k8s-app: kube-dns
  clusterIP: 10.96.0.2
  ports:
    - name: dns
      port: 53
      protocol: UDP
    - name: dns-tcp
      port: 53
      protocol: TCP
    - name: metrics
      port: 9153
      protocol: TCP

EOF

# Deploy
kubectl apply -f coredns.yaml

# Verify that DNS resolution works
kubectl get pods -n kube-system
[root@k8s-node1 kubernetes]# dig -t a www.baidu.com @10.96.0.2
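The dig query above exercises the upstream forwarding path. To also check in-cluster Service resolution, one can query a Service's fully qualified name, which has the form <service>.<namespace>.svc.<clusterDomain>. The helper below is a hypothetical sketch that builds that name, defaulting to the cluster.local domain configured in this guide.

```shell
# Build the in-cluster DNS name of a Service.
# $1: service name, $2: namespace (default "default"), $3: cluster domain
svc_fqdn() {
  printf '%s.%s.svc.%s\n' "$1" "${2:-default}" "${3:-cluster.local}"
}

# Usage: resolve the API server Service through CoreDNS
#   dig +short "$(svc_fqdn kubernetes default)" @10.96.0.2
```

A successful query returns the ClusterIP of the named Service.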

8.4. Deploy an application to verify

Test whether the application is reachable:

kubectl create ns test-nginx
kubectl create deploy my-nginx --image=nginx:1.28.0 -n test-nginx --dry-run=client -o yaml > my-nginx.yaml
kubectl apply -f my-nginx.yaml
kubectl expose deployment my-nginx --port=80 --target-port=80 --type=NodePort -n test-nginx --dry-run=client -o yaml > nginx-svc.yaml
kubectl apply -f nginx-svc.yaml

[root@host51 ~]# kubectl get svc -n test-nginx
NAME       TYPE       CLUSTER-IP   EXTERNAL-IP   PORT(S)        AGE
my-nginx   NodePort   10.96.7.73   <none>        80:30872/TCP   19s
[root@host51 ~]#
[root@host51 ~]# curl -ivk http://192.168.120.50:30872/
* Trying 192.168.120.50:30872...
* Connected to 192.168.120.50 (192.168.120.50) port 30872 (#0)
> GET / HTTP/1.1
> Host: 192.168.120.50:30872
> User-Agent: curl/7.71.1
> Accept: */*
>
* Mark bundle as not supporting multiuse
< HTTP/1.1 200 OK
HTTP/1.1 200 OK
< Server: nginx/1.28.0
Server: nginx/1.28.0
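The node port (30872 in the output above) is allocated by the cluster, so it will differ between runs. A minimal sketch for pulling it out of the PORT(S) column instead of copying it by hand follows; node_port_of is a hypothetical helper and only handles a single port entry.

```shell
# Extract the node port from a PORT(S) value such as "80:30872/TCP".
# Prints nothing when the value carries no node port (for example "80/TCP").
node_port_of() {
  printf '%s\n' "$1" | sed -n 's#^[0-9]*:\([0-9]*\)/.*$#\1#p'
}

# Usage:
#   port=$(node_port_of "80:30872/TCP")
#   curl -s -o /dev/null -w '%{http_code}\n' "http://192.168.120.50:${port}/"
# The same value is available directly from the API:
#   kubectl get svc my-nginx -n test-nginx -o jsonpath='{.spec.ports[0].nodePort}'
```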

9. References

9.1. Troubleshooting record: grafana-sc-datasources errors in kube-prometheus-stack

On a recent binary-deployed Kubernetes cluster on ARM, I installed the full monitoring stack with kube-prometheus-stack. Everything else ran normally; only the grafana-sc-dashboard and grafana-sc-datasources containers under Grafana failed. Because the cluster was deployed from binaries, its CA certificate is self-signed, so the k8s-sidecar containers hit CERTIFICATE_VERIFY_FAILED and neither the data sources nor the dashboards could load. An afternoon of attempts did not fix it; reading the source of the corresponding Helm chart finally did.

{"time": "2025-08-26T07:11:48.733288+00:00", "level": "ERROR", "msg": "MaxRetryError when calling kubernetes: HTTPSConnectionPool(host='10.96.0.1', port=443):Max retries exceeded with url: /api/v1/namespaces/monitoring/configmaps?labelSelector=grafana_dashboard%3D1&timeoutSeconds=60&watch=True(Caused by SSLError(SSLCertVerificationError(1, '[SSL: CERTIFICATE_VERIFY_FAILED] certificate verify failed: self signed certificate (_ssl.c:992)')))\n"}

{"time": "2025-08-26T07:11:48.733467+00:00", "level": "ERROR", "msg": "MaxRetryError when calling kubernetes: HTTPSConnectionPool(host='10.96.0.1', port=443):Max retries exceeded with url: /api/v1/namespaces/monitoring/secrets?labelSelector=grafana_dashboard%3D1&timeoutSeconds=60&watch=True(Caused by SSLError(SSLCertVerificationError(1, '[SSL: CERTIFICATE_VERIFY_FAILED] certificate verify failed: self signed certificate (_ssl.c:992)')))\n"

Solution

The Helm chart source shows that setting this one value is enough:

{{- if .Values.sidecar.skipTlsVerify }}
- name: SKIP_TLS_VERIFY
  value: "{{ .Values.sidecar.skipTlsVerify }}"
{{- end }}

Add the following to values.yaml and redeploy to resolve the problem:

grafana:
  sidecar:
    skipTlsVerify: true
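After redeploying with this value, the sidecar logs can be checked for the error string from the log excerpt earlier in this section. The filter below is a minimal sketch; has_tls_verify_error is a hypothetical helper, and the pod name in the usage line is a placeholder to fill in for the actual release.

```shell
# Read sidecar logs on stdin; succeed if the TLS verification error is present.
has_tls_verify_error() {
  grep -q 'CERTIFICATE_VERIFY_FAILED'
}

# Usage (namespace and container name as they appear in this section;
# <grafana-pod> is a placeholder):
#   kubectl logs -n monitoring <grafana-pod> -c grafana-sc-dashboard \
#     | has_tls_verify_error && echo "sidecar still fails TLS verification"
```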

Attempts that did not work

That afternoon I tried a range of approaches without success, including setting env variables and upgrading the version.

Ineffective 1:

sidecar:
  args:
    - --insecure-skip-tls-verify=true

Ineffective 2:

sidecar:
  args:
    - --kube-ca-file=/var/run/secrets/kubernetes.io/serviceaccount/ca.crt
    - --kube-token-file=/var/run/secrets/kubernetes.io/serviceaccount/token

Ineffective 3:

grafana:
  sidecar:
    dashboards:
      enabled: true
      env:
        - name: SKIP_TLS_VERIFY
          value: "true"
      extraArgs:
        - --insecure-skip-tls-verify

Ineffective 4:

sidecar:
  dashboards:
    enabled: true
    label: grafana_dashboard
    extraEnv:
      - name: KUBERNETES_SKIP_TLS_VERIFY
        value: "true"
      - name: KUBERNETES_SERVICE_HOST
        value: "10.96.0.1"
      - name: KUBERNETES_SERVICE_PORT
        value: "443"
  datasources:
    enabled: true
    label: grafana_datasource
    extraEnv:
      - name: KUBERNETES_SKIP_TLS_VERIFY
        value: "true"
      - name: KUBERNETES_SERVICE_HOST
        value: "10.96.0.1"
      - name: KUBERNETES_SERVICE_PORT
        value: "443"
